The system could make robot arms more precise and versatile in tasks such as picking up, assembling, and packaging items on assembly lines. The researchers believe that it could replace computer vision for some tasks.

An RFID tag is attached to the object to be tracked. A reader sends a wireless signal that reflects off the tag and other nearby objects. An algorithm sifts through the reflected signals to find the RFID tag’s response. The tag’s movement is used to improve the localisation accuracy.

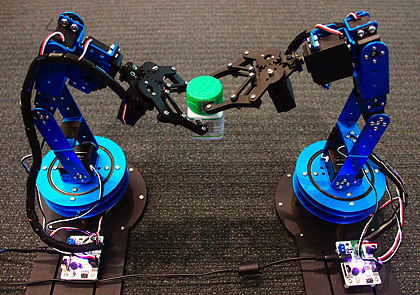

To validate the system, the researchers attached one RFID tag to a bottle and another to a bottlecap. A robot arm was able to locate the cap and place it onto the bottle, which was held by a second robot.

The researchers believe that the RFID system could overcome some of the drawbacks of machine vision, such as its difficulty in seeing objects in cluttered environments. RF signals are not limited in the same way: they can identify targets in clutter and even through walls.

“If you use RF signals for tasks typically done using computer vision, not only do you enable robots to do human things, but you can also enable them to do superhuman things,” says Fadel Adib, an assistant professor and principal investigator in the MIT Media Lab, and founding director of the Signal Kinetics Research Group. “And you can do it in a scalable way, because these RFID tags are only 3 cents each.”

Although there have been other attempts to use RFID tags for localisation, they usually trade accuracy against speed: to be accurate, they may take several seconds to find a moving object, and to be faster, they sacrifice accuracy.

The challenge for the MIT researchers was to achieve speed and accuracy at the same time. To do so, they drew inspiration from an imaging technique called “super-resolution imaging”, in which images taken from multiple angles are combined into a single image of finer resolution.

“The idea was to apply these super-resolution systems to radio signals,” Adib explains. “As something moves, you get more perspectives in tracking it, so you can exploit the movement for accuracy.”

The system combines a standard RFID reader with a “helper” component that is used to localise the RF signals. The helper emits a wideband signal containing multiple frequencies, building on orthogonal frequency-division multiplexing (OFDM), a modulation scheme used in wireless communication.
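
Why a wideband, multi-frequency signal helps with ranging can be sketched in a few lines. The Python below does not implement OFDM modulation itself; it only models what a receiver measures on each of several evenly spaced subcarriers when the signal bounces off point reflectors, and shows that an inverse DFT across those subcarriers turns the measurements into a delay profile whose peaks sit at each reflector’s round-trip delay. The subcarrier count, spacing and start frequency are hypothetical values chosen for illustration, and `simulate_channel` and `delay_profile` are made-up helper names.

```python
import cmath
import math

# Hypothetical parameters for illustration only, not the MIT system's values.
N_SUBCARRIERS = 64
SPACING_HZ = 5e6        # subcarrier spacing; total bandwidth = 320 MHz
START_HZ = 900e6        # assumed lowest subcarrier frequency


def simulate_channel(freqs_hz, reflectors):
    """Per-subcarrier response of point reflectors given as (gain, delay_s).

    A reflector with round-trip delay tau adds gain * exp(-j*2*pi*f*tau)
    at frequency f, so its delay shows up as a phase ramp across subcarriers.
    """
    return [sum(g * cmath.exp(-2j * math.pi * f * tau) for g, tau in reflectors)
            for f in freqs_hz]


def delay_profile(channel):
    """Inverse DFT across subcarriers; magnitude peaks sit at reflector delays.

    Bin m corresponds to a delay of m / (N * spacing), so the delay
    resolution is 1 / bandwidth.
    """
    n = len(channel)
    return [abs(sum(h * cmath.exp(2j * math.pi * k * m / n)
                    for k, h in enumerate(channel))) / n
            for m in range(n)]


freqs = [START_HZ + k * SPACING_HZ for k in range(N_SUBCARRIERS)]
bin_s = 1.0 / (N_SUBCARRIERS * SPACING_HZ)  # 3.125 ns per bin
# A strong clutter reflection at bin 4 and a weaker tag reflection at bin 10.
channel = simulate_channel(freqs, [(2.0, 4 * bin_s), (0.5, 10 * bin_s)])
profile = delay_profile(channel)
```

In this toy setup the clutter peak is four times stronger than the tag’s, and the delay resolution is one over the total bandwidth (3.125 ns here), so a wider bandwidth gives finer ranging.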

The system captures the signals rebounding off every object in the environment, including the RFID tag. The tag itself reflects and absorbs the incoming signal in a pattern that the system can recognise, which lets the system pick out the tag’s response from the other reflections.
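
One common way to pick a backscatter tag’s response out of the clutter is to compare measurements taken while the tag is reflecting with measurements taken while it is absorbing: everything that does not change between the two cancels. The sketch below assumes that approach, an ideal tag that reflects nothing in its absorbing state, and a hypothetical 64-subcarrier grid; it then estimates the tag’s round-trip delay with a simple matched filter. The article does not say the MIT system works exactly this way, and `tag_response` and `best_delay` are illustrative names.

```python
import cmath
import math

# Hypothetical subcarrier grid, for illustration only.
FREQS_HZ = [900e6 + k * 5e6 for k in range(64)]


def channel(freqs_hz, reflectors):
    """Per-subcarrier response of point reflectors given as (gain, delay_s)."""
    return [sum(g * cmath.exp(-2j * math.pi * f * tau) for g, tau in reflectors)
            for f in freqs_hz]


def tag_response(reflecting, absorbing):
    """Difference of the two tag states.

    Walls, tables and other static objects reflect identically in both
    states, so they cancel and only the tag's contribution remains
    (assuming an ideal tag that reflects nothing while absorbing).
    """
    return [a - b for a, b in zip(reflecting, absorbing)]


def best_delay(freqs_hz, response, candidates_s):
    """Matched filter: the delay whose phase ramp best aligns the response."""
    def score(tau):
        return abs(sum(h * cmath.exp(2j * math.pi * f * tau)
                       for f, h in zip(freqs_hz, response)))
    return max(candidates_s, key=score)


clutter = [(2.0, 12.5e-9), (1.2, 40e-9)]   # hypothetical static reflectors
tag = (0.5, 31.25e-9)                      # hypothetical tag reflection
reflecting = channel(FREQS_HZ, clutter + [tag])
absorbing = channel(FREQS_HZ, clutter)
isolated = tag_response(reflecting, absorbing)
candidates = [i * 0.25e-9 for i in range(400)]  # 0 to ~100 ns in 0.25 ns steps
estimated = best_delay(FREQS_HZ, isolated, candidates)
```

Because the clutter reflections here are stronger than the tag’s, searching the raw `reflecting` measurements would be dominated by them; the differencing step is what leaves the tag alone in the data.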

In a test of their RFID-based location system, the MIT researchers used it to place a cap on a bottle held by a second robot.

Because the signals travel at the speed of light, the system can compute a “time of flight” (the time a signal takes to travel between a transmitter and a receiver) and convert it into a distance, gauging the location of the tag as well as of the other objects. This provides a ballpark estimate of location.
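
The time-of-flight step reduces to simple arithmetic. Assuming the reader transmits and receives at the same antenna, the signal travels to the tag and back, so the range is half of c multiplied by the measured delay; a 1 ns timing error therefore shifts the range by about 15 cm, which is one reason this step gives only a ballpark figure. The sketch then combines ranges from three antennas at known positions into a coarse 2D position by trilateration. The antenna layout, the 2D simplification and the function names are assumptions for illustration, not details of the MIT system.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def range_from_round_trip(tof_s):
    """Reader -> tag -> reader: the signal covers the distance twice."""
    return C * tof_s / 2.0


def trilaterate_2d(antennas, ranges):
    """Coarse 2D position from three antennas at known positions.

    Subtracting the first circle equation from the other two removes the
    quadratic terms and leaves a 2x2 linear system, solved by Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = antennas
    r1, r2, r3 = ranges
    a11, a12 = 2 * (x2 - x1), 2 * (y2 - y1)
    a21, a22 = 2 * (x3 - x1), 2 * (y3 - y1)
    b1 = r1**2 - r2**2 + x2**2 - x1**2 + y2**2 - y1**2
    b2 = r1**2 - r3**2 + x3**2 - x1**2 + y3**2 - y1**2
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("antennas must not be collinear")
    return ((b1 * a22 - a12 * b2) / det, (a11 * b2 - b1 * a21) / det)


antennas = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]  # hypothetical layout, metres
true_tag = (1.0, 1.0)
tofs = [2 * math.dist(a, true_tag) / C for a in antennas]  # ideal round trips
ranges = [range_from_round_trip(t) for t in tofs]
estimate = trilaterate_2d(antennas, ranges)
```

With perfect timing the estimate lands on the true position; with real timing noise each range carries an error of roughly 15 cm per nanosecond, and the coarse position inherits it.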

To zoom in on the tag’s location, the researchers developed what they call a “space-time super-resolution” algorithm, which combines the location estimates for all the rebounding signals, including the RFID signal. Using probability calculations, it narrows these down to a handful of potential locations for the RFID tag.
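
The article does not describe the space-time super-resolution algorithm in detail, so the following is only a generic stand-in for the “probability calculations” step: a Bayesian grid search, not the researchers’ method. Assuming Gaussian range errors with standard deviation `sigma_m` and a uniform prior over a square grid, it scores each grid cell by how well it explains the measured ranges, normalises the scores into probabilities and keeps the few most probable cells. The antenna layout, error values and function names are hypothetical.

```python
import math


def log_likelihood(point, antennas, ranges, sigma_m):
    """Gaussian log-likelihood of the measured ranges, up to a constant."""
    total = 0.0
    for antenna, r in zip(antennas, ranges):
        err = math.dist(point, antenna) - r
        total -= err * err / (2 * sigma_m ** 2)
    return total


def top_candidates(antennas, ranges, sigma_m, step_m, extent_m, k=5):
    """Posterior over a square grid (uniform prior); return the k most probable cells."""
    n = int(round(extent_m / step_m)) + 1
    grid = [(i * step_m, j * step_m) for i in range(n) for j in range(n)]
    scores = [log_likelihood(p, antennas, ranges, sigma_m) for p in grid]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]  # subtract peak for stability
    total = sum(weights)
    ranked = sorted(zip(grid, weights), key=lambda pw: pw[1], reverse=True)
    return [(p, w / total) for p, w in ranked[:k]]


antennas = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]  # hypothetical layout, metres
true_tag = (1.0, 1.0)
errors = [0.05, -0.03, 0.04]  # fixed, made-up measurement errors
ranges = [math.dist(a, true_tag) + e for a, e in zip(antennas, errors)]
shortlist = top_candidates(antennas, ranges, sigma_m=0.1, step_m=0.1, extent_m=4.0)
```

The result is a short list of (position, probability) pairs rather than a single answer, which matches the idea of narrowing the search to a handful of candidates before refining further.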

As the tag moves, the angle of its signal changes slightly, and each change also corresponds to a particular location. The algorithm can use these changes in angle to track the tag’s distance as it moves. By constantly comparing the changing distance with distance measurements from the other signals, it can locate the tag in 3D space in a fraction of a second.
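
Assuming the “angle” here is the phase of the tag’s signal (the usual basis of phase-based RFID tracking), the link between angle and distance is direct: over a round trip, moving d metres shifts the phase by 4πd/λ, so an unwrapped phase sequence gives precise changes in distance. The sketch below converts phase to displacement and then uses that displacement to choose, from a handful of candidate starting ranges, the one that best matches a series of noisy coarse range measurements. It is an illustration under stated assumptions, not the MIT algorithm; the 915 MHz carrier, the motion and the noise values are hypothetical.

```python
import math

C = 299_792_458.0            # speed of light, m/s
WAVELENGTH_M = C / 915e6     # hypothetical UHF RFID carrier


def unwrap(phases_rad):
    """Undo 2*pi wrap-arounds so consecutive samples differ by less than pi."""
    out = [phases_rad[0]]
    for p in phases_rad[1:]:
        step = (p - out[-1] + math.pi) % (2 * math.pi) - math.pi
        out.append(out[-1] + step)
    return out


def displacement_from_phase(phases_rad, wavelength_m):
    """Change in range since the first sample.

    Round trip: moving d metres adds 4*pi*d / wavelength of phase. Valid only
    if the tag moves less than a quarter wavelength between samples.
    """
    unwrapped = unwrap(phases_rad)
    return [(p - unwrapped[0]) * wavelength_m / (4 * math.pi) for p in unwrapped]


def pick_start_range(candidates_m, coarse_ranges_m, displacements_m):
    """Candidate start range whose predicted track best fits the coarse ranges."""
    def mismatch(r0):
        return sum((r - (r0 + d)) ** 2
                   for r, d in zip(coarse_ranges_m, displacements_m))
    return min(candidates_m, key=mismatch)


# Hypothetical tag moving 1 cm per sample away from the antenna, starting at 2 m.
true_ranges = [2.0 + 0.01 * t for t in range(20)]
phases = [(4 * math.pi * r / WAVELENGTH_M) % (2 * math.pi) for r in true_ranges]
coarse = [r + (0.08 if t % 2 else -0.08) for t, r in enumerate(true_ranges)]  # noisy ToF
displacements = displacement_from_phase(phases, WAVELENGTH_M)
best = pick_start_range([1.8, 2.0, 2.2, 2.4], coarse, displacements)
```

The phase alone cannot say where the tag started, only how far it moved, and the coarse ranges alone are noisy; combining the two over many samples is what lets the correct candidate stand out.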

“By combining these measurements over time and over space, you get a better reconstruction of the tag’s position,” Adib explains.

Another potential application for the technology is in small “nanodrones” used for search-and-rescue missions. These drones currently rely on computer vision for localisation, and they often get confused in chaotic areas, lose track of each other behind walls, and cannot identify each other. This limits their ability to spread out over an area and collaborate, for example to search for a missing person. Using the MIT system, swarms of drones could locate each other for greater control and collaboration.

“You could enable a swarm of nanodrones to form in certain ways, fly into cluttered environments, and even environments hidden from sight, with great precision,” says Zhihong Luo, a graduate student in the Signal Kinetics Research Group.

In a demonstration, the MIT researchers tracked RFID-equipped nanodrones during docking, manoeuvring and flying operations. The system was as accurate and fast as traditional computer-vision systems, while working in areas where computer vision would fail.

MIT’s work was sponsored, in part, by the US National Science Foundation.